CommunityData:Wikia data

XML Dumps

So far, all of our Wikia projects use the XML dumps. These are stored on Hyak at /gscratch/comdata/raw_data/wikia_dumps.

Use the 2010 dumps

The 2010 Wikia dumps were created by Wikia itself and are the only ones that approach being complete or reliable. They include the full history of ~76K wikis. In general, if the questions you want to answer don't require newer data, use the 2010 dumps.

- While the process of creating the dumps is somewhat opaque, it appears that most of the dumps were created in early January 2010. The last dumps were created in early April 2010.

The next most useful dumps are from WikiTeam and were obtained from archive.org. As of May 23, 2017, the most recent complete dumps [1] from WikiTeam were from December 2014. Mako found some missing data in these dumps and contacted WikiTeam, who released a patch [2] that we have yet to validate. Another release exists from 2020 [3], which was the most current as of January 31, 2021.

These, and all other dumps after 2010, have a few issues that make using them complicated:

- Wikis that never gain traction are deleted from the DB. The criteria for which wikis get deleted are not clear.

- There have been a number of technical changes to the site since 2010 (e.g., forums and message walls).

- Other dumps were created not by Wikia but via various other processes (e.g., through the API), and they have missing data, truncated data, etc.

Knowing if a dump is valid

The most common problem with dumps is truncation. Sometimes a tag is never closed, and expat-based parsers like python-mediawiki-utils / wikiq will fail. Commonly, dumps are cut off after a revision or page for some unknown reason. We assume that an XML dump is an accurate representation of the wiki if it has opening and closing <mediawiki> tags and is valid XML. Sometimes Wikia dumps have funny quirks (e.g., they put SHA1s in weird places). We just have to work around these. Consider including and surfacing such fields when building tools for working with MediaWiki data so that language objects accurately reflect the underlying data.

Nate and Salt are working on tools to help with this.
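In the meantime, the validity criterion above (a `<mediawiki>` root that opens and closes, and well-formed XML in between) can be roughly checked with Python's standard library. The sketch below streams through a dump with `xml.etree.ElementTree.iterparse`, which is itself built on expat. The function name `check_dump` and its messages are made up for this example, it assumes the dump has already been decompressed, and it is not the tooling mentioned above.

```python
import xml.etree.ElementTree as ET


def check_dump(source):
    """Return (is_valid, message) for a dump given as a path or binary file object.

    Hypothetical helper: it only checks that the root element is <mediawiki>
    and that the whole file parses, which is the criterion described above.
    """
    root_tag = None
    pages = 0
    try:
        for event, elem in ET.iterparse(source, events=("start", "end")):
            # Tags carry the export namespace, e.g. "{http://...}page"; keep the local name.
            name = elem.tag.rsplit("}", 1)[-1]
            if event == "start":
                if root_tag is None:
                    root_tag = name
                    if root_tag != "mediawiki":
                        return False, f"root element is <{root_tag}>, not <mediawiki>"
            elif name == "page":
                pages += 1
                # Discard the finished page's children so memory use stays modest.
                elem.clear()
    except ET.ParseError as err:
        # A truncated dump ends up here: the parser hits end-of-file
        # while elements are still open.
        return False, f"not well-formed XML (possibly truncated): {err}"
    return True, f"parsed to the end, {pages} pages"
```

Calling `check_dump("some_wiki.xml")` (a placeholder filename) returns a pair such as `(False, "not well-formed XML ...")` for a truncated file. Passing this check does not rule out the quirks mentioned above, such as oddly placed SHA1s; it only catches structural breakage.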

Obtaining fresh dumps

If the WikiTeam data doesn't suit your needs, you will probably need to get a dump yourself. The first thing to try is downloading it straight from Wikia via the wiki's Special:Statistics page. Note that you need to be logged in to do this.

- Visit the Special:Statistics page of the wiki you want to download, e.g. http://althistory.wikia.com/wiki/Special:Statistics

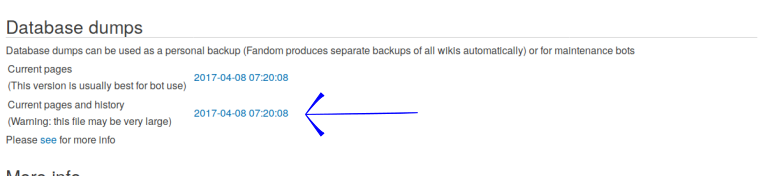

- Click the link (it's the timestamp) for "current pages and history".

If this dump is out of date or doesn't exist, you will have to request a new one. There is currently no easy way for non-admins to request new dumps, so you will probably need to contact Wikia.

Another option, if you don't need dumps for too many wikis, is to get them from the API [4]. This will take a long time for big wikis or for a lot of wikis, so do whatever work you can beforehand to minimize the list of wikis you need.
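To give a sense of what working through the API involves, here is a minimal Python sketch of the first step only: listing a wiki's page titles with the standard MediaWiki `action=query&list=allpages` module and following its continuation. Several things here are assumptions rather than anything documented on this page: that the wiki exposes `api.php` at the URL you pass in, that it returns the newer `continue` style of continuation (older MediaWiki versions used `query-continue` instead), and the helper names `fetch_json` and `iter_page_titles`. Note that `allpages` lists only the main namespace by default, and that fetching full revision histories would take many more requests per page.

```python
import json
import urllib.parse
import urllib.request


def fetch_json(api_url, params):
    """GET api_url with the given query parameters and decode the JSON reply."""
    query = urllib.parse.urlencode(params)
    with urllib.request.urlopen(f"{api_url}?{query}") as resp:
        return json.load(resp)


def iter_page_titles(api_url, fetch=fetch_json):
    """Yield page titles (main namespace by default) from a wiki's API.

    `fetch` is injectable so the paging logic can be exercised offline.
    """
    base = {"action": "query", "list": "allpages", "aplimit": "max", "format": "json"}
    cont = {}
    while True:
        data = fetch(api_url, {**base, **cont})
        for page in data["query"]["allpages"]:
            yield page["title"]
        if "continue" not in data:
            break  # no more batches
        # Send the returned continuation values back with the next request.
        cont = data["continue"]
```

For example, `for title in iter_page_titles("http://althistory.wikia.com/api.php"): ...` would walk the wiki used in the example above, assuming its API lives at that address. Each batch is a separate HTTP request, which is part of why this route is slow for large wikis.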